Machine Vision in Robotics – Intelligent Cobots using 3D Vision

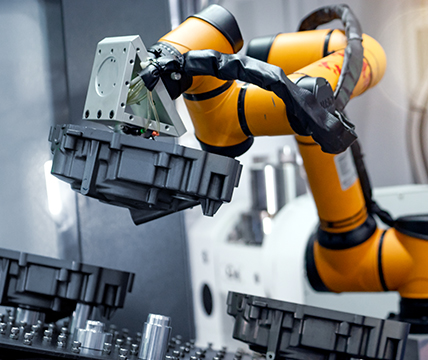

Collaboration between humans and robots is constantly growing. With a 3D vision-guided robotic system from LMI Technologies and Universal Robots, the STEMMER IMAGING experts enable the collaborative robots to perform demanding pick-and-place tasks. Using machine vision, they can grip objects and move them to a target point without any unwanted collisions – together with their human colleagues.

The task

Enable collaborative robots (cobots) to "see" with the help of a machine vision system to safely perform pick-and-place tasks in the shared "workspace" with humans.

The challenge

The cobots have to locate and visualise 3D objects in changing production environments, make control decisions based on this information and execute precision-based mechanical movements – safely and reliably without colliding with their human colleagues.

The solution

A sophisticated 3D vision system, which is perfectly adapted to the high-performance cobots from Universal Robots, allows direct interfacing of 3D snapshot sensors with robots with no additional software or PC required. The result is a powerful robotics solution that can perform the most complex pick-and-place tasks in automated assembly processes based on 3D data.

"The demands on the system's flexibility and safety are extremely high. On the one hand, the cobots must be able to identify parts randomly posed in three dimensions (i.e., X-Y-Z), and accurately discover each part’s 3D orientation. On the other hand, they must be able to act one hundred per cent safely in the shared workspace with humans without endangering people."

Peter Keppler, Senior Director International Sales Enablement, STEMMER IMAGING

Collaboration between robots and humans in pick-and-place applications

The use of small to medium-sized collaborative robots for factory automation applications is growing at a rapid rate. Many of these applications are pick-and-place, so the robots require machine vision to visualise the scene, process information to make control decisions and execute precision-based mechanical movements.

Customised solution: Direct interfacing of smart 3D vision system with robots

2D-driven systems can only locate parts on a flat plane relative to the robot. Smart systems equipped with 3D vision and direct interfacing with collaborative robots can identify parts randomly posed in three dimensions (i.e., X-Y-Z), and accurately discover each part’s 3D orientation. This is a key capability for effective robotic pick-and-place.

The robotic system – Highly precise and efficient

The highly efficient robotic system is based on 3D vision components from LMI Technologies and cobots from Universal Robots (UR). LMI Technologies have developed a special plug-in, which allows direct interfacing of the Gocator 3D snapshot sensors with the robots from Universal Robots (UR). The 3D snapshot sensor can be connected to the robot via Ethernet using the Gocator URCap plugin. The Gocator's 3D coordinate system is then mapped directly into the robot’s coordinate system, making the 3D vision-guided robotic system simple and particularly efficient with no additional software or PC required.
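To illustrate what mapping one coordinate system into another means, here is a minimal sketch of a rigid transform from a sensor frame to a robot base frame. It does not reproduce how the Gocator URCap plugin works internally. The function names, the calibration values and the restriction to a rotation about the Z axis are assumptions made for this example only.

```python
import math

def make_transform(yaw_deg, translation):
    """Build a 4x4 homogeneous transform: rotate about Z by yaw_deg, then translate.

    A real calibration would generally involve a full 3D rotation; a single
    yaw angle is an assumption that keeps the example short.
    """
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    tx, ty, tz = translation
    return [
        [c,   -s,  0.0, tx],
        [s,    c,  0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]

def sensor_to_robot(transform, point):
    """Express a 3D point measured in the sensor frame in the robot base frame."""
    x, y, z = point
    p = (x, y, z, 1.0)  # homogeneous coordinates
    return tuple(sum(row[i] * p[i] for i in range(4)) for row in transform[:3])

# Hypothetical calibration: sensor frame rotated 90 degrees about Z and
# offset from the robot base by (400, 0, 250) mm.
T = make_transform(90.0, (400.0, 0.0, 250.0))

# A part located by the sensor at (10, 20, -50) mm in its own frame.
pick = sensor_to_robot(T, (10.0, 20.0, -50.0))
print([round(v, 3) for v in pick])
```

Once a calibration of this kind is known, every point the sensor reports can be converted into a target the robot can move to, which is why no intermediate PC is needed to translate between the two devices.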

Leading-edge 3D technology driving the success of machine vision in robotics

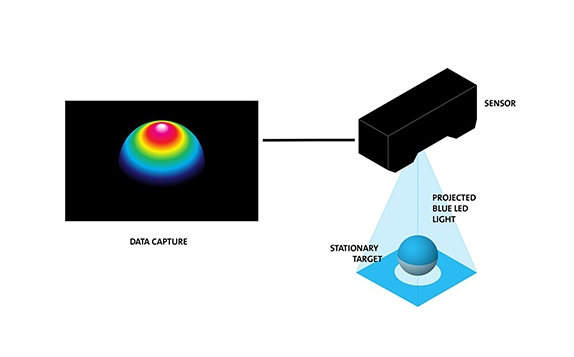

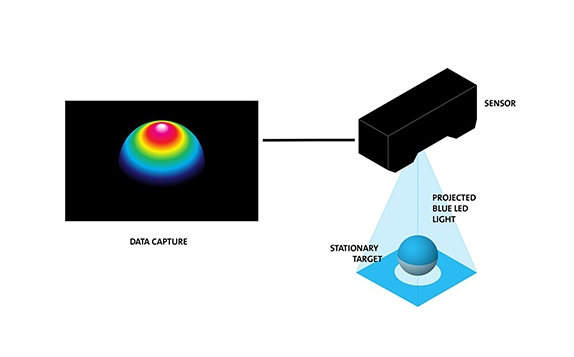

The Gocator snapshot sensors, used to deliver reliable 3D data, combine fringe projection using blue-LED structured light with a rich array of built-in 3D measurement tools and decision-making logic to scan and inspect any part feature with stop/go motion at speeds up to 6 kHz.

The blue LED projects one or more high-contrast light patterns onto the object to allow full-field 3D point cloud acquisition by the stereo cameras, providing excellent ambient light immunity, even in challenging conditions.

The solution's success factors

High-performance snapshot sensors

LMI's Gocator snapshot sensors provide reliable and repeatable data – thanks to high ambient light immunity even in challenging lighting conditions – and are capable of performing high-resolution measurements in non-contact online inspection applications.

Direct interfacing with robots

The 3D snapshot sensor can be connected directly to the robot over Ethernet using the Gocator URCap plugin. No extra software or PC is needed for implementation, making the whole system compact, simple and highly efficient.

Versatile application possibilities

Gocator tools such as "Bounding Box", "Height" or "Part Matching" can be used to solve a wide variety of pick-and-place applications easily with no need for additional programming. Parts can be positioned systematically or randomly on moving conveyors, stacked bins, or pallets.
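The general idea behind a bounding-box measurement can be sketched in a few lines: given the 3D points belonging to one part, compute the box that encloses them and use its centre as a candidate pick position. This is an illustrative sketch of the concept, not the implementation of the Gocator tool. The function name and the sample points are hypothetical, and an axis-aligned box is assumed for simplicity.

```python
def bounding_box(points):
    """Return (min_corner, max_corner, center, size) of an axis-aligned box
    enclosing a list of (x, y, z) points."""
    xs, ys, zs = zip(*points)
    lo = (min(xs), min(ys), min(zs))
    hi = (max(xs), max(ys), max(zs))
    center = tuple((a + b) / 2 for a, b in zip(lo, hi))
    size = tuple(b - a for a, b in zip(lo, hi))
    return lo, hi, center, size

# Hypothetical point cloud of a small part, in millimetres.
part_points = [
    (0.0, 0.0, 0.0),
    (40.0, 0.0, 0.0),
    (40.0, 20.0, 0.0),
    (0.0, 20.0, 10.0),
]

lo, hi, center, size = bounding_box(part_points)
print("center:", center)  # candidate pick position
print("size:", size)      # extent along X, Y and Z
```

A box that is only aligned to the axes cannot describe a rotated part, so a real system would also need to estimate the part's orientation before gripping.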

"There is a wide range of possible applications including pick-and-place of incoming raw materials or subassemblies travelling on a transport system such as conveyors or pallets, random placement and picking from a conveyor or placing finished products/assemblies into appropriate bins."

Peter Keppler, Chief Marketing Officer (CMO), STEMMER IMAGING

More successful projects in factory automation

100% quality control in manual assembly

For automotive supplier Kautex Textron, STEMMER IMAGING and Envisage Systems developed an automated inspection solution that helped to significantly optimise the manual assembly processes. The comprehensive solution, which fully integrated the vision system into the assembly process, resulted in an 87% reduction in the total number of assembly-related defects and ensured 100% quality control of all products.